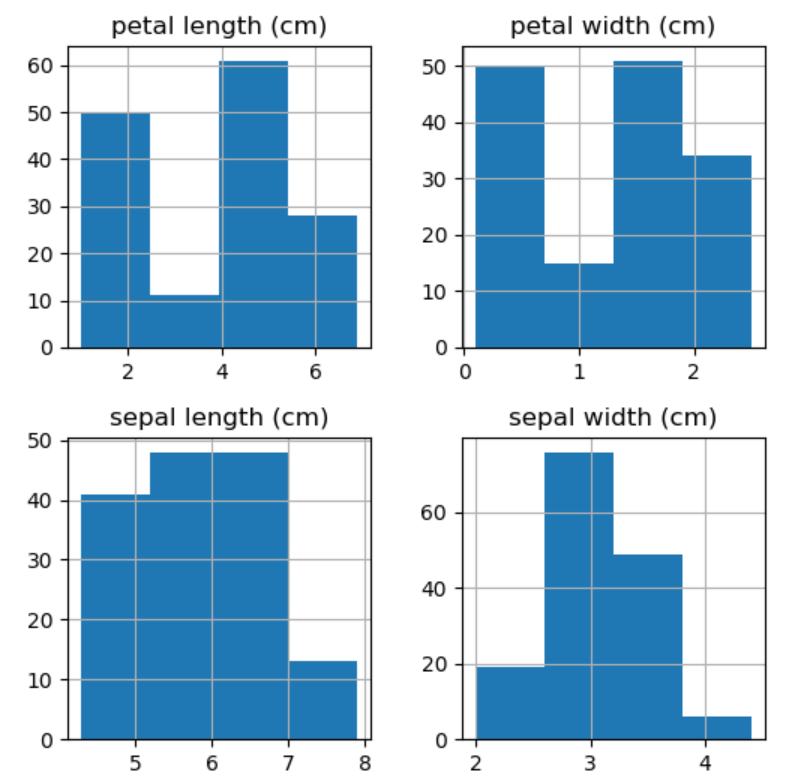

The results are shown in the following image: The following code implements the entropy(s) formula and calculates it on the same vector: Let’s repeat the calculation in Python next. The result of 0.88 indicates the split is nowhere near pure. Image 5 - Entropy calculation (image by author) The entropy calculation is as simple as it can be from this point (rounded to five decimal points): Image 4 - Class distribution summary (image by author) To summarize, zeros and ones are the class labels with the following counts: Image 3 - Entropy input (image by author) Imagine you want to calculate the purity of the following vector: Image 2 - Entropy formula (image by author)Īs you can see, it’s a relatively simple equation, so let’s see it in action. Its value ranges from 0 (pure) and 1 (impure). As mentioned earlier, it measures a purity of a split at a node level. You’ll only have to implement two formulas for the learning part - entropy and information gain. Math Behind Decision Treesĭecision trees represent much more of a coding challenge than a mathematical one. Let’s talk about the math behind the algorithm in the next section. Once the leaf node is reached, the most common value is returned.Īnd that’s it for the basic theory and intuition behind decision trees. We can check for the traversal direction (left or right) based on the input data and learned thresholds at each node. Once the tree is built, we can make predictions for unseen data by recursively traversing the tree. Both will be discussed later upon implementation. The most common ones are maximum depth and minimum samples at the node. The recursion process could go on forever, so we’ll have to specify some exit conditions manually. In this way, the tree is built recursively. The algorithm then performs a greedy search - goes over all input features and their unique values, calculates information gain for every combination, and saves the best split feature and threshold for every node. We’ll discuss the formula and calculations later, but please remember that the higher the gain is, the better the decision split is. Put simply, it represents an average of all entropy values based on a specific split.

Then, for every single split, the Information gain metric is calculated. To begin training the decision tree classifier, we have to determine the root node. The variable with the lowest entropy is then used as a root node. We’ll discuss the formula and the calculations later, but you should remember that the entropy value ranges from 0 (best) to 1 (worst). To further decide which of the impure features is most pure, we can use the Entropy metric. If none of the features alone is 100% correct in the classification, we can consider these features impure. In a nutshell, we need to check how every input feature classifies the target variable independently. So, how do we determine the root node? How to determine the root node Leaf nodes- final nodes at which the prediction is madeĭepending on the dataset size (both in rows and columns), there are probably thousands to millions of ways the nodes and their conditions can be arranged.These nodes have arrows pointing to them and away from them Decision nodes- nodes where the variables are evaluated.It contains a feature that best splits the data (a single feature that alone classifies the target variable most accurately) Root node- node at the top of the tree.Image 1 - Example decision tree representation with node types (image by author)Īs you can see, there are multiple types of nodes: Let’s take a look at an example decision tree first:

You’ll get a crash course in recursion in a couple of minutes, so don’t sweat it if you’re a bit rusty on the topic. If you decide to follow along, the term recursion shouldn’t feel like a foreign language, as the algorithm is based on this concept. We’ll discuss different types of nodes in a bit. The from-scratch implementation will take you some time to fully understand, but the intuition behind the algorithm is quite simple.ĭecision trees are constructed from only two elements - nodes and branches. Introduction to Decision Treesĭecision trees are a non-parametric model used for both regression and classification tasks. You can download the corresponding notebook here. The links to the previous articles are located at the end of this piece. This is the fifth of many upcoming from-scratch articles, so stay tuned to the blog if you want to learn more. After reading, you’ll know how to implement a decision tree classifier entirely from scratch. Machine Learning can be easy and intuitive - here’s a complete from-scratch guide to Decision Treesĭecision trees are one of the most intuitive machine learning algorithms used both for classification and regression.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed